The Epic Data Ingestion Solution

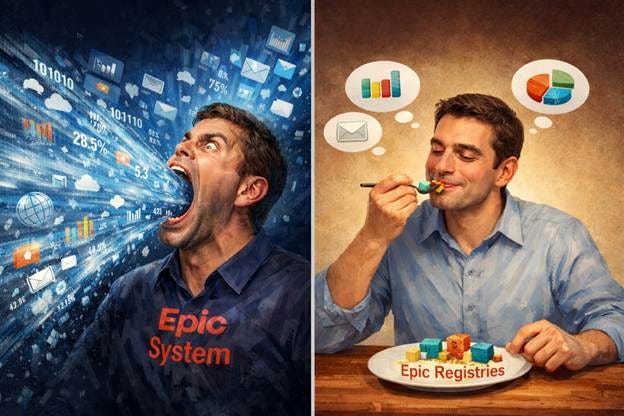

Maybe Epic Organizations Need to Learn to Chew Before Swallowing

I think most people will grasp the concepts in this piece, but be warned: there is a lot of Epic and SQL nerdishness below.

Epic has a couple of features that could address the healthcare data ingestion crisis I called out in my last piece. Very few organizations use them to their full potential.

I’ve been exposed to dozens of Epic implementations. It has always surprised me how organizations spending hundreds of millions (and sometimes billions) of dollars on Epic systems skip some of the key foundational data tools that can help them succeed. The examples I’ve seen include large multihospital health systems, community hospitals, and even ivory tower academic organizations.

Here’s what I find consistently: I estimate that most Epic organizations are leaving well over 90% of the potential of tools called SmartData Elements and Registries on the table. On top of that, almost none have leveraged Metadata infrastructure to govern them.

These aren’t minor features. They’re the potential Epic answer to many of the problems I called out in my last piece: how do you capture, abstract, and leverage the torrents of clinical data healthcare generates daily?

The frustrating thing is that these capabilities have existed for years, even decades.

What SmartData Elements Actually Do

SmartData Elements are data elements generated behind the scenes in Epic that can be connected to multiple contexts:

Did something happen to a patient (ever)?

Did something happen during a specific encounter?

Was something documented in a particular note?

Did a specific condition exist during a time period?

Here’s why this matters: SmartData Elements create reusable, governed data abstractions that can be surfaced anywhere in Epic workflows.

A concrete example:

You are looking to see if ED physicians are using a specific tool for documenting sepsis resuscitation reevaluations. Currently, this might be your workflow:

You tell your physicians the list of things you want them to document after they initiate fluid resuscitation.

You rely on abstractors to manually pore through mind-numbing, bloated documentation and record their findings in an external spreadsheet.

You then browbeat your physicians who don’t document properly.

You wonder why your sepsis metrics always stink.

I suspect this process sounds excruciatingly familiar to many of you.

Here is what you can do instead:

Create a SmartText that shows up automatically in the physician’s note template when resuscitation is initiated. It contains all the requisite information.

You attach SmartData behind the scenes to the SmartText.

Because of SmartData, what the physicians document is captured seamlessly, naturally, and as discrete data when they fill out the note.

You run reports and show instantaneously that your sepsis reevaluation compliance is over 95% without digging through hundreds of records.
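
To make that last step concrete, here is a minimal sketch of the kind of calculation a report could run once the reevaluation is captured as discrete data. The record layout and field names (`fluids_started`, `reeval_documented`) are hypothetical stand-ins for whatever the SmartData build actually files, and the sample rows are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class SepsisEncounter:
    encounter_id: str
    fluids_started: bool      # fluid resuscitation was initiated
    reeval_documented: bool   # hypothetical SmartData element filed from the SmartText


def reevaluation_compliance(encounters):
    """Share of fluid-resuscitation encounters with a documented reevaluation."""
    eligible = [e for e in encounters if e.fluids_started]
    if not eligible:
        return None  # no denominator, so there is no rate to report
    documented = sum(1 for e in eligible if e.reeval_documented)
    return documented / len(eligible)


# Toy data, invented for illustration only.
sample = [
    SepsisEncounter("E1", True, True),
    SepsisEncounter("E2", True, True),
    SepsisEncounter("E3", True, False),
    SepsisEncounter("E4", False, False),  # never resuscitated, so excluded
]
rate = reevaluation_compliance(sample)
print(f"Reevaluation compliance: {rate:.0%}")
```

The point is not the arithmetic. It is that the numerator and denominator come from discrete fields rather than from an abstractor reading notes.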

The Problem: Nobody Knows They Exist

Here’s why SmartData Elements are underutilized:

First, operational stakeholders and clinicians don’t know they exist, so they don’t request them.

Second, SmartData requires creativity. You’re not just configuring Epic. You’re designing data abstractions that encode clinical meaning. This requires understanding both Epic’s technical architecture and clinical workflows deeply.

Third, SmartData requires governance. Without it, you get chaos:

Multiple teams creating duplicate SmartData for the same concept

Inconsistent definitions across departments

SmartData Elements that contradict each other

No documentation of what SmartData Elements exist or mean

Technical debt that becomes unmaintainable

Finally, you need experts to tie it all together: people who understand that SmartData exists, can create seamless pathways to encode the data, and can build the meta infrastructure to organize and govern it.

This isn’t easy. This is why even large Epic organizations underutilize the tool.

The ICU Monitoring Problem, Revisited

Let’s return to Julio LaTorre’s ICU example with SmartData in mind.

ICU monitors generate continuous data streams. As Brendan Keeler pointed out, Epic can store this waveform data. But storing raw waveforms isn’t the solution.

What you actually need are clinically meaningful abstractions:

“The patient experienced 3 episodes of SVT >180 bpm, with a total duration of 47 minutes.”

“Respiratory rate variability increased 6 hours before clinical decompensation.”

“Hemodynamic instability pattern consistent with early sepsis.”

Here’s what SmartData architecture could potentially look like:

Step 1: External Analysis

An ICU monitoring system or a computer vision vendor uses AI to analyze continuous physiological data or images.

The system identifies clinically relevant patterns and generates structured abstractions.

Step 2: SmartData Creation

Create SmartData Elements for each meaningful abstraction.

“Significant ventricular tachycardia during encounter”

“Early sepsis hemodynamic pattern”

“Respiratory decline warning flag”

Step 3: Integration

External system populates SmartData through Epic APIs.

SmartData surfaces automatically in:

ICU flowsheets

Clinical handoff reports

Sepsis screening tools

Quality dashboards

Research databases

Step 4: Governance

Clinical informatics validates definitions.

IT maintains integration pipelines.

Quality teams monitor data accuracy.

Documentation explains clinical meaning.

This is the analog of the natural gas capture infrastructure I noted in my previous fracking metaphor. You’re not forcing Epic to become a data lake. You’re building the abstraction layer between data lakes and clinical workflows.
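
As a rough illustration of Step 1, the sketch below collapses a heart-rate stream into the kind of abstraction quoted earlier (episode count and total duration). It is an assumption-laden toy: the function name, the 180 bpm threshold, and the 30-second minimum duration are illustrative choices, not clinical guidance and not how any particular monitoring vendor works.

```python
def summarize_svt(samples, threshold_bpm=180, min_duration_s=30):
    """Collapse (seconds, bpm) samples into an episode count and total duration.

    samples: list of (timestamp_seconds, heart_rate_bpm), sorted by time.
    An episode is a run of consecutive samples above threshold_bpm lasting
    at least min_duration_s. Both cutoffs are illustrative defaults.
    """
    episodes = []
    start = last = None
    for t, bpm in samples:
        if bpm > threshold_bpm:
            if start is None:
                start = t
            last = t
        elif start is not None:
            episodes.append((start, last))
            start = last = None
    if start is not None:  # the stream ended in the middle of an episode
        episodes.append((start, last))
    kept = [(s, e) for s, e in episodes if e - s >= min_duration_s]
    return {
        "episode_count": len(kept),
        "total_minutes": sum(e - s for s, e in kept) / 60,
    }


# Toy stream sampled every 10 seconds; values invented for illustration.
stream = [(t, 95) for t in range(0, 60, 10)]
stream += [(t, 190) for t in range(60, 180, 10)]   # 60s to 170s above threshold
stream += [(t, 100) for t in range(180, 240, 10)]
stream += [(t, 185) for t in range(240, 260, 10)]  # only 10s long, so dropped
stream += [(t, 90) for t in range(260, 300, 10)]
summary = summarize_svt(stream)
print(summary)
```

Only the small summary dictionary would need to land in a SmartData Element. The raw waveform stays wherever it is already stored.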

Epic Registries: Where SmartData Gets Powerful

Registries are better understood than SmartData but still dramatically underutilized.

Epic Registries are rule-based data structures that pull from multiple Epic sources to create longitudinal patient views and enable population-level queries. They’re delivered by Epic with vanilla content for common use cases (diabetes, heart failure, etc.).

But here’s what organizations miss: Registries can capture and aggregate almost any combination of data that is discretely available in Epic’s Chronicles database. This includes SmartData Elements.

And here’s one thing that really surprises me: I’ve never seen anyone fully flesh out the encounter-based Inpatient and Emergency Department registries.

This is odd because so many quality initiatives revolve around inpatient and ED populations:

Early sepsis recognition and management

Hospital-acquired conditions (pressure ulcers, falls, infections)

ED revisit rates and bounce-backs

Acute decompensation recognition

Medication reconciliation failures

Discharge readiness assessment

All of these require synthesizing data across the encounter: what happened in the ED, what treatments were given, what complications occurred, and what was the discharge condition?

Inpatient and ED Registries can be purpose-built for this. But organizations stick with vanilla Epic content and never customize it with SmartData that captures their specific quality priorities.

The Registry Paradox: Building the Same Logic Twice

Here’s a pattern I see constantly:

The Traditional Approach:

The clinical team identifies a quality problem (e.g., early readmissions for heart failure).

The request goes to the data/BI team: “We need to identify high-risk patients.”

The data team spends weeks writing complex SQL queries against Caboodle.

Queries pull from multiple tables, apply inclusion/exclusion logic, and calculate risk scores.

The BI dashboard gets built, showing high-risk patients.

The clinical team reviews the dashboard and gains insights.

The clinical team wants to act: “Can we trigger an alert in Epic for high-risk patients?”

Now IT/clinical informatics has to reconstruct the entire logic in Epic.

Rebuilding takes weeks because Epic’s Chronicles data structure differs from the relational tables the SQL was written against.

By the time it’s operational, the glacier has moved and we are on to another project.

The problems with this approach:

Problem 1: Laborious Duplication. You’ve essentially built the same clinical logic twice: once in SQL for analytics and once in Epic for operations. The two builds differ in language, data structures, and maintenance requirements.

Problem 2: Long Lag Times. The data team builds queries (2-4 weeks). Clinical review and refinement takes 1-2 weeks. The Epic build through operationalization takes another 2-4 weeks. That is 5-10 weeks of build time alone. Add the handoffs between teams and you’re 2-3 months from insight to action.

Problem 3: Logic Drift. SQL queries and Epic logic inevitably diverge over time. The data team updates the query. The Epic team doesn’t get notified. Now your dashboard shows different patients than your Epic alerts flag. Nobody trusts either one.

Problem 4: Scaling. You want to expand from heart failure to COPD, sepsis, and diabetes. Now you’re maintaining 4 separate SQL query sets and 4 separate Epic builds, all drifting independently.
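
Logic drift is easy to describe and annoying to detect. A minimal sketch of a drift check, assuming you can export the patient IDs behind the dashboard and the IDs the Epic alert flagged (the function name and the sample IDs are hypothetical):

```python
def compare_cohorts(dashboard_ids, alert_ids):
    """Report where two builds of the 'same' logic disagree."""
    dashboard, alerts = set(dashboard_ids), set(alert_ids)
    union = dashboard | alerts
    return {
        "only_in_dashboard": sorted(dashboard - alerts),
        "only_in_alerts": sorted(alerts - dashboard),
        # Jaccard overlap: 1.0 means the two cohorts are identical.
        "agreement": len(dashboard & alerts) / len(union) if union else 1.0,
    }


# Toy IDs, invented for illustration only.
drift = compare_cohorts([101, 102, 103, 104], [102, 103, 105])
print(drift)
```

If you find yourself needing a check like this, that is itself a sign the logic lives in two places.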

The Registry Solution: Build Clinical Logic Once

Here’s what properly implemented Registries enable:

The Registry Approach:

Build a Heart Failure Registry in Epic with inclusion criteria, risk stratification logic, and relevant SmartData Elements.

Registry logic runs continuously in Epic’s operational database.

The same registry automatically populates Caboodle with structured, pre-curated data.

The data team queries the Registry table in Caboodle (simple SQL: SELECT * FROM HeartFailureRegistry WHERE readmission_risk_score > 80).

BI dashboards pull from the Registry table.

The clinical team reviews insights.

Want to operationalize? Registry logic already exists in Epic.

Build OPA alerts, reports, or workflows using the same Registry criteria already defined.

One source of truth. One logic set. The analytics and operations are in sync.

Why this matters:

Registries are essentially modular subqueries that work in both operational Epic and analytical Caboodle.

You define the clinical logic once:

Who qualifies for the registry? (inclusion/exclusion criteria)

What risk stratification applies? (calculated fields, SmartData)

What outcomes are we tracking? (events, interventions, results)

What time periods matter? (encounter-based date ranges)

This logic then:

Runs continuously in operational Epic

Populates structured Registry tables in Caboodle

Provides clean, governed data for analytics

Enables rapid operationalization of insights
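
The "define once, use twice" idea can be sketched outside of Epic. In the toy below, a single membership function feeds both an analytics-style table and an operational alert decision. Everything here is hypothetical: the `Patient` fields, the hospice exclusion, and the threshold of 80 are illustrative assumptions, not Epic's registry build.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    patient_id: str
    has_hf_diagnosis: bool
    on_hospice: bool   # hypothetical exclusion criterion
    risk_score: int


def in_hf_registry(p):
    """The single definition of membership: inclusion minus exclusion."""
    return p.has_hf_diagnosis and not p.on_hospice


def registry_rows(patients):
    """Analytics view: one flat row per registry member."""
    return [
        {"patient_id": p.patient_id, "risk_score": p.risk_score}
        for p in patients
        if in_hf_registry(p)
    ]


def should_alert(p, threshold=80):
    """Operational view: the same membership rule plus a risk cutoff."""
    return in_hf_registry(p) and p.risk_score > threshold


# Toy patients, invented for illustration only.
patients = [
    Patient("P1", True, False, 92),
    Patient("P2", True, True, 95),    # excluded: hospice
    Patient("P3", False, False, 88),  # excluded: no HF diagnosis
    Patient("P4", True, False, 55),
]
analytics = registry_rows(patients)
alerts = [p.patient_id for p in patients if should_alert(p)]
print(analytics, alerts)
```

Because both views call `in_hf_registry`, changing the exclusion rule changes the dashboard and the alert together.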

The SQL Advantage

If you’ve curated good Registries, your data science work becomes dramatically simpler.

Without Registries:

SQL

-- Data team’s Gordian Knot query

SELECT DISTINCT p.patient_id, p.name,

-- 15 lines calculating readmission risk

-- 20 lines pulling medication adherence

-- 10 lines for recent vitals

-- 8 lines for comorbidities

-- Complex date logic

-- Multiple table joins

-- Exclusion criteria buried in WHERE clauses

FROM patient p

JOIN encounter e ON ...

JOIN diagnosis d ON ...

JOIN medication m ON ...

-- The query is 200+ lines and takes 3 weeks to write.

-- It breaks whenever table structures change, even slightly.

With Registries:

SQL

-- Data team’s dream query

SELECT patient_id, name, readmission_risk_score,

medication_adherence, recent_decompensation

FROM HeartFailureRegistry

WHERE readmission_risk_score > 80

AND last_encounter_date > CURRENT_DATE - 30

-- The query is 5 lines and takes 5 minutes to write.

-- survives Epic upgrades because Registry abstracts table changes

The Registry has already done the heavy lifting:

Applied inclusion/exclusion criteria

Calculated risk scores

Pulled relevant clinical data

Structured it in a queryable format

Your data team goes from 3 weeks of SQL development to 5 minutes.
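
For readers who want to run the "dream query," here is a small self-contained sketch using Python's built-in sqlite3 module as a stand-in database. The table layout, column names, and rows are hypothetical and invented for illustration; a real Caboodle registry table will look different, and date arithmetic syntax varies by database engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE HeartFailureRegistry (
        patient_id INTEGER PRIMARY KEY,
        name TEXT,
        readmission_risk_score INTEGER,
        medication_adherence REAL,
        recent_decompensation INTEGER,
        last_encounter_date TEXT  -- ISO dates, so string comparison sorts correctly
    )
    """
)
conn.executemany(
    "INSERT INTO HeartFailureRegistry VALUES (?, ?, ?, ?, ?, ?)",
    [
        (1, "Patient A", 91, 0.62, 1, "2025-01-20"),
        (2, "Patient B", 85, 0.90, 0, "2024-10-02"),  # high risk, stale encounter
        (3, "Patient C", 40, 0.95, 0, "2025-01-25"),  # recent, but low risk
    ],
)

# A fixed reference date keeps the example reproducible.
as_of = "2025-02-01"
rows = conn.execute(
    """
    SELECT patient_id, name, readmission_risk_score
    FROM HeartFailureRegistry
    WHERE readmission_risk_score > 80
      AND last_encounter_date > date(?, '-30 days')
    """,
    (as_of,),
).fetchall()
print(rows)
```

All of the clinical logic (who is in the registry, how risk is scored) is assumed to have been settled upstream. The query only filters.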

The Data Validation Advantage

Here’s another critical benefit organizations miss: Registries make data validation dramatically easier.

The Data Warehouse Validation Problem:

Your data team builds a complex SQL query identifying sepsis patients. The query pulls from 15 different Caboodle tables, applies intricate logic, and produces a list of 247 patients.

A clinician reviews the list and says, “Patient Johnson shouldn’t be on here. She didn’t have sepsis.”

Now what?

The data analyst has to:

Find Patient Johnson in Caboodle (patient_id = some obscure number).

Trace back through the query logic to see why she qualified.

Check each of the 15 source tables to see what data triggered inclusion.

Figure out which Epic workflow generated that data.

Possibly discover the data is wrong in Caboodle because of an ETL issue.

Or discover the query logic is flawed.

Fix the issue and rerun the query.

Hope the fix didn’t break something else.

The validation process for a single patient can take several hours or even days.

The Registry Validation Advantage:

With a Sepsis Registry built in Epic:

Search for Patient Johnson directly in Epic.

Open her chart.

Open the Registry editor with the chart open.

The Registry editor surfaces the logic right there—showing each criterion and whether the patient passed or failed.

See exactly which criteria triggered her inclusion. Was it the lactate value? The vital signs? The diagnosis code? Testing is literally 2 clicks.

Click through to the source documentation in the chart.

Identify whether the logic is correct or the data/workflow is wrong.

Fix the issue (Registry logic, workflow design, or Epic configuration).

Registry automatically recalculates.

See the corrected result immediately.

Validation time: minutes, not days.

Why this matters:

Epic Registries operate on operational data, not warehouse data. You’re closer to the source. You can see the actual patient chart, the actual documentation, and the actual workflows that generated the data.

The Registry editor shows you pass/fail status for each criterion while you’re looking at the patient’s actual clinical data. No breadcrumb trails through obscure data warehouse tables. No translating between Caboodle’s data structures and Epic’s clinical workflows. No mysterious ETL transformations that might have corrupted data.

You’re testing on real patients in their actual clinical context with the logic transparent right in front of you.
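
The per-criterion pass/fail view is the key idea, and it can be sketched in a few lines. This is not the Registry editor, just an illustration of why transparent criteria make validation fast. The criteria names, cutoffs, and the "any two criteria" rule are hypothetical and are not a clinical definition of sepsis.

```python
# Hypothetical inclusion rules, for illustration only.
SEPSIS_CRITERIA = {
    "lactate_over_2": lambda pt: pt.get("lactate_mmol_l", 0) > 2.0,
    "heart_rate_over_90": lambda pt: pt.get("heart_rate", 0) > 90,
    "sepsis_diagnosis_code": lambda pt: pt.get("has_sepsis_dx", False),
}


def explain_inclusion(patient, criteria=SEPSIS_CRITERIA, required=2):
    """Return pass/fail for every criterion plus the overall decision."""
    results = {name: bool(rule(patient)) for name, rule in criteria.items()}
    return {"criteria": results, "included": sum(results.values()) >= required}


# Toy patient, invented for illustration only.
johnson = {"lactate_mmol_l": 2.4, "heart_rate": 112, "has_sepsis_dx": False}
report = explain_inclusion(johnson)
for name, passed in report["criteria"].items():
    print(f"{name}: {'PASS' if passed else 'fail'}")
print("Included:", report["included"])
```

A reviewer can see at a glance that this toy patient qualified on lactate and heart rate without a diagnosis code, which is exactly the question the clinician was asking.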

A Real Example:

If you’ve followed my writing, you know of my recent project looking at coprescribing of benzodiazepines and narcotics. We noticed an inordinate number of patients who fell into the inclusion criteria. Because the criteria were put together in a registry record, we could rapidly test people we thought should be outliers. Within 15 minutes, we realized the problem: benzodiazepine orders placed years ago had no end dates, so those patients were still being included. That let us put in a fix, which took 30 seconds.

With a Caboodle-only approach: The data team would spend days tracing through a maze of joins in Caboodle tables to find the same answer. Then, we would have to engineer a WHERE clause from the same join maze to accomplish the same thing.
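
The failure mode is worth spelling out, because it bites in any system that treats a missing end date as "still active." The sketch below shows the bug and one possible guard. The `max_open_days` cap is a hypothetical fix chosen for illustration; it is not necessarily the fix used in the project described above.

```python
from datetime import date, timedelta


def is_active(order_start, order_end, on_date, max_open_days=None):
    """Return True if a medication order counts as active on on_date.

    order_end=None models an order with no end date. With max_open_days=None,
    such an order stays active forever. Passing max_open_days caps how long
    an open-ended order is treated as active (a hypothetical guard).
    """
    if order_start > on_date:
        return False
    if order_end is not None:
        return on_date <= order_end
    if max_open_days is None:
        return True
    return on_date <= order_start + timedelta(days=max_open_days)


# Toy dates, invented for illustration only.
today = date(2025, 2, 1)
old_benzo_start = date(2019, 6, 1)

naive = is_active(old_benzo_start, None, today)                     # years-old order still counts
capped = is_active(old_benzo_start, None, today, max_open_days=90)  # guard drops it
print(naive, capped)
```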

The Three Types of Problems Registries Reveal

This is critical: Registry validation exposes three different problem types that warehouse queries have difficulty surfacing quickly:

Problem Type 1: Logic Issues

Registry criteria are wrong.

Clinical definitions don’t match operational reality.

Inclusion/exclusion logic needs refinement.

Fix: Modify Registry logic

Problem Type 2: Workflow Issues

Documentation isn’t happening where expected.

Staff aren’t following defined workflows.

Required fields aren’t being completed.

Fix: Workflow training, process improvement

Problem Type 3: Epic Configuration Issues

Required fields aren’t actually required in the Epic build.

Flowsheets are missing key data elements.

SmartData is not capturing intended information.

Fix: Epic configuration changes, build updates

Caboodle queries hide which type of problem you have. Everything looks like “bad data” or “bad query logic.”

Registries with the editor open alongside charts reveal: “The basic logic is fine. The medication orders are documented poorly. Adjust the logic to fit the reality of the data.”

That’s operational intelligence you can actually act on.

The Operationalization Advantage

But here’s where it gets really powerful: when insights lead to action.

Your data team discovers that heart failure patients with:

Readmission risk score >80

Medication adherence <70%

Recent weight gain >5 lbs.

Have 40% readmission rates vs. 8% for others.

You want to operationalize: trigger a care coordination intervention for high-risk patients.

Without Registries: The Epic team has to rebuild all that logic within Epic from scratch. It requires different tools, different data structures, and weeks of development.

With Registries: The logic already exists in the Registry. Build a report that filters on those Registry fields. Configure an OurPractice Advisory (OPA, formerly Best Practice Advisory or BPA) that fires when Registry criteria are met. Create a patient list or dashboard for care coordinators that pulls from the Registry.

Development time: days, not weeks. This is because you are utilizing pre-existing and thoroughly tested blocks of logic.

The Governance Multiplier

This gets even more powerful with proper governance.

When you have well-curated Registries:

Data definitions are standardized - “Readmission risk score” means the same thing in analytics and operations.

Logic is centrally maintained - Update the Registry once, changes propagate everywhere.

Audit trails exist - You can see when and why Registry logic changed.

Documentation is required - Each Registry has clear inclusion criteria and field definitions.

Validation is systematic - Clinical teams verify Registry populations regularly.

Without Registries, each team maintains their own version of “heart failure patient.” Definitions drift. Nobody knows which version is authoritative. Insights can’t be operationalized because “our SQL logic doesn’t match Epic’s logic.”

With Registries, you have one governed source of truth that serves both analytics and operations.

Why Organizations Don’t Do This

If Registries solve the double-build problem, why don’t organizations use them?

Same reasons as SmartData:

Few people know this is possible - There has not been a critical mass of organizations that have leveraged this, so it isn’t a well-known approach. Operational teams simply don’t ask for this.

Siloed teams - Data/BI reports to finance or strategy. Clinical informatics reports to IT or CMO. Nobody has accountability for “build clinical logic that serves both analytics and operations.”

Different skillsets - Data analysts know SQL but not Epic’s Chronicles. Epic analysts know Chronicles but not data science. Fully leveraging Registry architecture requires both.

Implementation momentum - Epic implementations focus on core workflows. Registries are “phase 2” that never happens. It gets relegated to the dreaded “optimization” black hole of project planning.

Lack of governance - Building good Registries requires clinical, IT, and analytics teams to agree on definitions, logic, and maintenance. This is extremely hard. It takes someone who can manage diverse teams and who also knows data science.

So organizations build the same clinical logic twice. Maintain it in two places. Watch it drift. Complain about the lag between insight and action.

Meanwhile, the potential of Epic Registries sits there, designed specifically to solve this problem.

A Real Example: Surgical Quality Registries

In January 2025, the American College of Surgeons announced a collaboration with Epic for a new surgical clinical registry. This is potentially transformative for surgical quality improvement.

But here’s the reality: the AHA created a similar stroke registry years ago. It atrophied from low utilization.

The ACS-Epic registry has the potential to avoid this trap by:

Capturing data automatically during surgical workflows

Leveraging SmartData and other discrete data tools for abstracted surgical outcomes

Once the abstraction occurs, we can:

Mingle the registry with other data in Epic’s data warehouse (Caboodle) and eventually Cosmos.

Surface insights back into clinical decision-making and frontline workflows

Imagine, then, combining the ACS registry with surgical AI tools that can analyze OR video and physiological data:

The Architecture:

The AI tools analyze surgical videos and intraoperative vitals.

AI identifies concerning patterns (blood loss, tissue handling, and hemodynamic instability).

SmartData Elements encode: “Time under anesthesia,” “Intraoperative blood transfusion,” “Operative approach.”

SmartData and other data (like Epic’s Event records) automatically populate the ACS surgical registry.

Registry data flows to Caboodle for outcomes tracking.

Insights surface in Epic’s surgical navigator and post-op handoff.

Population-level analysis identifies predictors of complications.

Feedback loop: AI models improve with real-world outcomes data.

I think this sort of learning system is what Julio LaTorre wants our healthcare IT systems to do. The technology exists. The registry exists. The integration pathways exist.

What’s missing: the expertise and organizational appetite to tie all these steps together: architect SmartData abstractions, govern the integrations, and build the feedback loops.

The Complete SmartData Architecture

Here, then, is what this looks like at a high level:

Layer 1: Data Generation

Epic native data (documentation, orders, results)

External devices (ICU monitors, surgical systems, wearables)

Third-party systems (labs, imaging, patient-reported outcomes)

AI/ML tools (computer vision, sepsis prediction, image analysis)

Layer 2: Abstraction (SmartData Elements)

Define clinically meaningful data abstractions.

Create SmartData Elements for each abstraction.

Build a governance framework for SmartData creation.

Document clinical definitions and use cases.

Layer 3: Integration

External systems populate SmartData through APIs.

SmartData surfaces in Epic workflows automatically.

SmartData flows to appropriate Registries.

Data governance monitors quality and consistency.

Layer 4: Synthesis (Epic Registries)

Registries combine Epic native data with SmartData.

Create longitudinal patient views across encounters.

Enable cross-domain population queries.

Support quality reporting and clinical research.

Layer 5: Analytics & Learning

Caboodle for Epic-centric analytics

Cosmos (Epic’s aggregated, de-identified multi-organization data set) for advanced ML/AI

Research databases for clinical trials

Quality dashboards for continuous improvement

Layer 6: Feedback Loop

Insights from analytics inform clinical workflows.

AI models improve with real-world outcomes.

SmartData definitions are refined based on usage.

Registries expanded to new use cases.

Epic doesn’t need to be everything. Epic needs to be the workflow integration layer that connects everything.

The Governance Imperative

This only works with proper governance.

Organizations need:

SmartData governance committee - Clinical informatics, IT, and quality teams reviewing all new SmartData Elements

Naming conventions - Consistent, searchable SmartData naming

Clinical validation - Clear definitions of what each SmartData Element means clinically

Documentation standards - Every SmartData Element documented with use cases, clinical meaning, and data sources.

Version control - Changes to SmartData definitions tracked and communicated

Audit processes - Regular review of SmartData accuracy and utilization

Integration oversight - External data sources validated for clinical appropriateness

Without governance, you get chaos: multiple teams building duplicate SmartData, conflicting definitions, and technical debt that becomes unmaintainable.
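
Some of this governance can be automated. Here is a minimal sketch of a catalog audit that flags naming violations, missing definitions, and duplicate concepts. The catalog structure, the uppercase-with-underscores naming pattern, and the sample entries are all hypothetical; a real governance committee would define its own conventions.

```python
import re
from collections import defaultdict

# Hypothetical convention: uppercase words joined by underscores.
NAME_PATTERN = re.compile(r"^[A-Z]+(_[A-Z0-9]+)+$")


def audit_catalog(elements):
    """elements: list of dicts with 'name', 'concept', and 'definition' keys."""
    by_concept = defaultdict(list)
    issues = []
    for el in elements:
        by_concept[el["concept"].strip().lower()].append(el["name"])
        if not NAME_PATTERN.match(el["name"]):
            issues.append(("bad_name", el["name"]))
        if not el.get("definition", "").strip():
            issues.append(("missing_definition", el["name"]))
    for names in by_concept.values():
        if len(names) > 1:  # two elements claim the same clinical concept
            issues.append(("duplicate_concept", tuple(sorted(names))))
    return issues


# Toy catalog, invented for illustration only.
catalog = [
    {"name": "ED_SEPSIS_REEVAL", "concept": "Sepsis reevaluation",
     "definition": "Reassessment documented after fluid bolus"},
    {"name": "ICU_SEPSIS_REASSESS", "concept": "sepsis reevaluation",
     "definition": ""},
    {"name": "hfRisk", "concept": "HF readmission risk",
     "definition": "Calculated readmission risk score"},
]
issues = audit_catalog(catalog)
for issue in issues:
    print(issue)
```

A script like this does not replace the committee. It just gives the committee a shorter list to argue about.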

Epic provides the technical capability. Organizations must provide the governance discipline.

This is why organizations need experts who understand SmartData exists, can architect the abstractions, and can build the meta infrastructure to organize and govern it.

The ACS Registry as Bellwether

I believe the ACS-Epic surgical registry will be a test case.

If organizations approach this as “turn on the registry and we’re done,” it will fail like the AHA stroke registry. Data capture will be incomplete. Surgeons won’t see value. Utilization will drop.

If organizations approach this as “build Epic architecture to automatically capture surgical abstractions from multiple sources, including AI tools, then leverage the registry as the synthesis layer,” it could transform surgical quality improvement.

The difference isn’t Epic’s technology. The difference is organizational sophistication about data architecture and data capture.

What This Means for AI in Healthcare

Julio LaTorre argues Epic can’t support AI-enabled healthcare. I think he’s looking at the wrong level.

Epic doesn’t necessarily need to build the AI models for every minuscule piece of data out there. Epic needs to be the integration layer that:

Receives AI-generated insights via SmartData

Surfaces those insights in clinical workflows

Enables clinicians to act on insights without leaving Epic

Captures outcomes for AI model improvement

Synthesizes AI insights with other clinical data in Registries

Organizations that build SmartData and Registry infrastructure now can create the bridge to nimble, iterative AI-enabled healthcare.

Organizations waiting for “Epic’s replacement” will wait forever. And when that replacement comes (if it comes), they’ll rebuild from scratch. This is because they never created the data abstractions necessary for transferring to new systems.

The Truth About Epic “Limitations”

Epic isn’t your limitation. Your expertise is.

SmartData Elements have existed for over a decade. Registries even longer. The ACS surgical registry provides a ready-made framework. Integration APIs exist.

What’s missing: experts who can architect SmartData abstractions, build governance frameworks, and create the meta infrastructure to make this sustainable.

This expertise is rare. Epic training doesn’t teach it. Implementation partners focus on core builds. Most organizations don’t even know what questions to ask.

The organizations that figure this out won’t just survive the AI transition. They’ll lead it. This is because they will have the infrastructure necessary to integrate any emerging AI capabilities.

Next article: Why clinicians won’t leave Epic even when AI gets excellent. And why that’s actually the right outcome.

John Lee is an emergency physician and Epic consultant who learned these lessons through direct implementation experience, including creating the exact problems he now helps organizations avoid. He posts frequently on LinkedIn.
